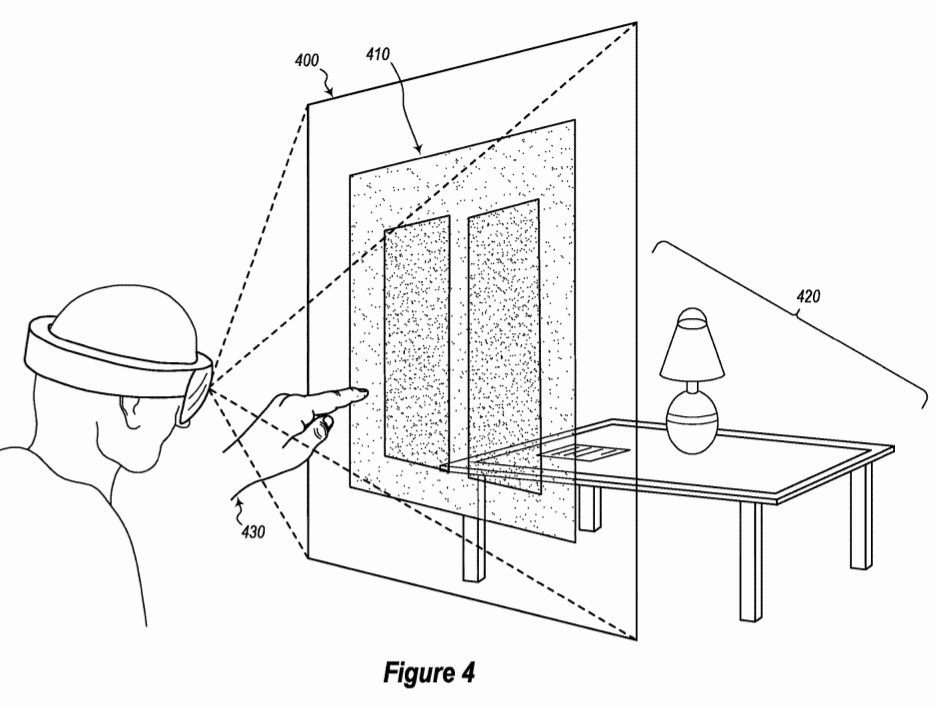

Microsoft’s latest patent describes an improved mixed-reality computer system, a category that covers both augmented-reality and virtual-reality systems. In the patent application, Microsoft describes a system that uses hand tracking to simulate direct interaction with a virtual tablet in mixed reality.

We spotted a patent application titled “USING TRACKING TO SIMULATE DIRECT TABLET INTERACTION IN MIXED REALITY”. It was filed by Redmond-based Microsoft in May 2017 and published by the USPTO on 29 November.

The patent application’s background section describes the trend toward mixed-reality devices and the problems users face when interacting with them:

“As alluded to above, immersing a user into an augmented-reality environment creates many challenges and difficulties that extend beyond the mere presentation of a scene to a user. For instance, conventional augmented-reality systems are deficient in how they receive user input directed at a virtual object, such as a virtual tablet or touch screen. Among other things, conventional systems lack functionality for guiding and assisting the user when she is targeting a location to actually enter input on a virtual display or touch surface. Accordingly, there exists a strong need in the field to improve a user’s interactive experience with virtual objects in an augmented-reality scene,” Microsoft explains in the background section.

Microsoft’s proposed solution is laid out in the application’s summary and detailed description sections:

“Optimizations are provided for facilitating interactions with virtual objects included within an augmented-reality scene. Initially, an augmented-reality scene is rendered for a user. Within that scene, an interactive virtual object of an application is rendered. Then, the position of the user’s actual hand is determined relative to the interactive virtual object. When the user’s actual hand is within a target threshold distance to the interactive virtual object, then a target visual cue is projected onto the interactive virtual object. When the user’s actual hand is within an input threshold distance to the interactive virtual object, then an input visual cue is projected onto the interactive virtual object. Once the user’s hand is within the input threshold distance to the interactive virtual object, then input may be provided to the application via the interactive object,” Microsoft explains.
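The two-threshold behaviour described above can be illustrated with a small per-frame sketch. This is not Microsoft’s implementation; the patent text quoted here does not include code. Everything in the example is an assumption for illustration: the hypothetical names `VirtualTablet`, `select_cue` and `handle_frame`, the model of the tablet as an infinite flat plane (its edges are ignored), and the threshold values of 15 cm and 2 cm. The only idea taken from the patent is the ordering: a target cue once the hand comes within a larger target threshold, and an input cue, with input forwarded to the application, once it comes within a smaller input threshold.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Tuple

Vec3 = Tuple[float, float, float]


class Cue(Enum):
    NONE = "none"      # hand too far away: no cue is projected
    TARGET = "target"  # within the target threshold: show where the hand is aiming
    INPUT = "input"    # within the input threshold: show the input cue and accept input


@dataclass
class VirtualTablet:
    # Hypothetical model: a flat panel given by a point on its surface and a
    # unit normal vector. Units are assumed to be metres.
    origin: Vec3
    normal: Vec3


def distance_to_tablet(hand: Vec3, tablet: VirtualTablet) -> float:
    """Perpendicular distance from the tracked hand to the tablet's plane."""
    diff = [h - o for h, o in zip(hand, tablet.origin)]
    return abs(sum(d * n for d, n in zip(diff, tablet.normal)))


def select_cue(distance: float,
               target_threshold: float = 0.15,
               input_threshold: float = 0.02) -> Cue:
    """Map the hand's distance to a cue. Threshold values are illustrative guesses."""
    if input_threshold >= target_threshold:
        raise ValueError("input threshold must be smaller than target threshold")
    if distance <= input_threshold:
        return Cue.INPUT
    if distance <= target_threshold:
        return Cue.TARGET
    return Cue.NONE


def handle_frame(hand: Vec3, tablet: VirtualTablet,
                 on_input: Callable[[Vec3], None]) -> Cue:
    """One tracking update: choose a cue and forward input only in the INPUT state."""
    cue = select_cue(distance_to_tablet(hand, tablet))
    if cue is Cue.INPUT:
        on_input(hand)  # the application receives the hand position as input
    return cue
```

For a tablet lying in the z = 0 plane, `handle_frame((0.1, 0.2, 0.10), tablet, print)` would only show the target cue, while a hand 1 cm from the surface would trigger the input cue and call `on_input`. Checking the input threshold first means the closer, stricter condition always takes priority over the targeting cue.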